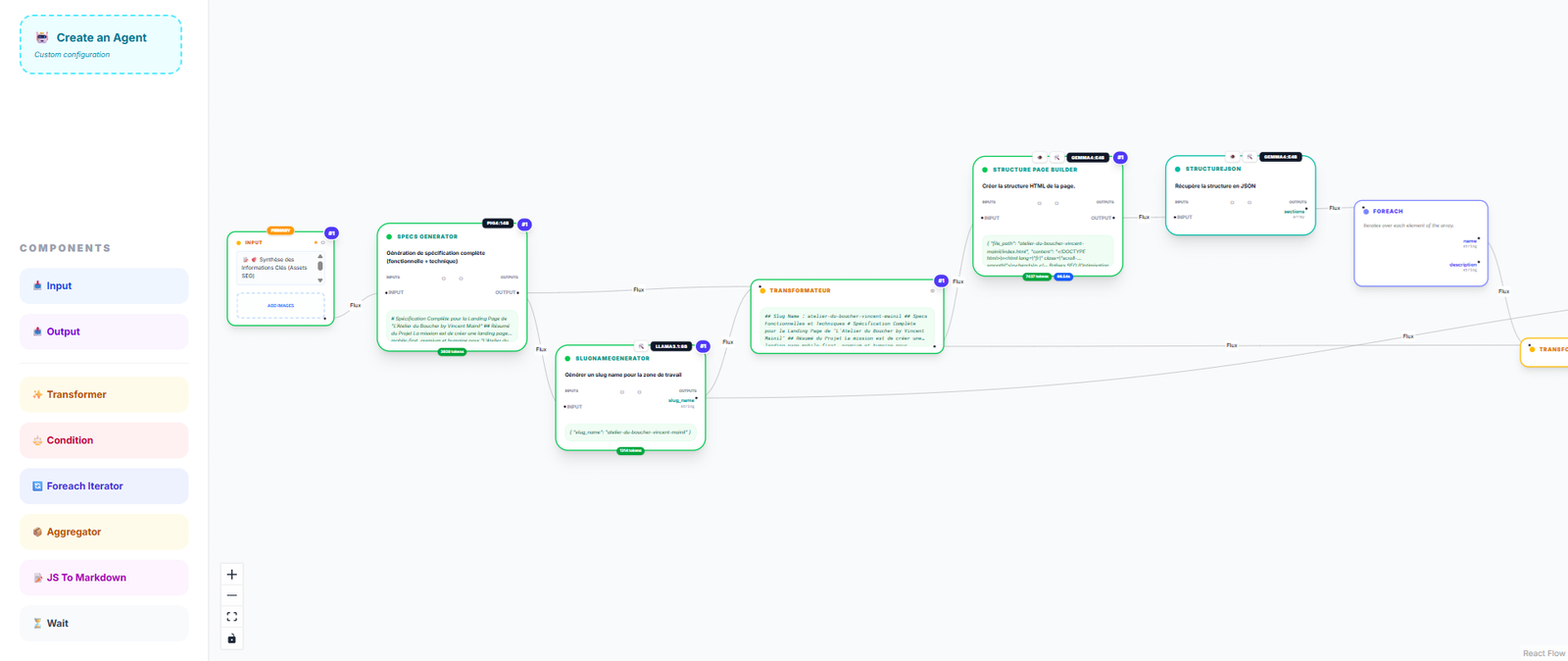

Beyond Simple Prompts: Visual Orchestration

Native Loops & Logic

Build complex business logic with Foreach loops, conditions, and aggregators without glue code.
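As a rough illustration of what a foreach, condition, and aggregator chain computes, here is a minimal plain-Python sketch. It is not the product's API: `run_foreach` and the sample `orders` data are hypothetical names chosen for this example.

```python
from typing import Any, Callable, Iterable, List


def run_foreach(
    items: Iterable[Any],
    condition: Callable[[Any], bool],
    step: Callable[[Any], Any],
    aggregate: Callable[[List[Any]], Any],
) -> Any:
    """Hypothetical helper: loop over items, filter, transform, then aggregate."""
    # Foreach + condition: only items that pass the condition reach the step.
    results = [step(item) for item in items if condition(item)]
    # Aggregator: collapse the per-item results into a single value.
    return aggregate(results)


# Sample data, invented for this sketch.
orders = [
    {"id": 1, "total": 40.0},
    {"id": 2, "total": 250.0},
    {"id": 3, "total": 120.0},
]

# Sum the totals of all orders worth at least 100.
large_order_revenue = run_foreach(
    orders,
    condition=lambda order: order["total"] >= 100.0,
    step=lambda order: order["total"],
    aggregate=sum,
)
```

In a visual workflow, each of the three callables would correspond to a node on the canvas rather than a function argument.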

Human-in-the-Loop

The ask_tool_ui node lets an agent pause and ask the user for clarification or missing details before proceeding.
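The pause-and-resume pattern can be sketched with a Python generator: the agent yields a question, the runner collects an answer, and execution resumes where it stopped. This is only an illustration of the idea, not how ask_tool_ui is implemented; `booking_agent` and `run_with_human` are hypothetical names.

```python
from typing import Callable, Generator, List


def booking_agent(request: dict) -> Generator[str, str, str]:
    """Hypothetical agent: pauses to ask for a missing detail, then finishes."""
    if "date" not in request:
        # Pause here; the value passed to send() becomes the answer.
        answer = yield "Which date should I book?"
        request = {**request, "date": answer}
    return f"Booked {request['room']} on {request['date']}"


def run_with_human(
    agent: Generator[str, str, str], answer_fn: Callable[[str], str]
) -> str:
    """Drive the agent, routing every question it yields to answer_fn."""
    try:
        question = next(agent)  # run until the first pause (or completion)
        while True:
            question = agent.send(answer_fn(question))  # resume with the answer
    except StopIteration as done:
        return done.value  # the agent's final result


asked: List[str] = []


def scripted_user(question: str) -> str:
    # Stand-in for a real UI prompt; records the question and returns a fixed answer.
    asked.append(question)
    return "2025-01-15"


result = run_with_human(booking_agent({"room": "Room A"}), scripted_user)
```

If the request already contains a date, the agent never pauses and the runner returns the result directly.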

Native Tool Calling

Equip agents with system tools, custom tools, and specialized skills. Tools are selected manually for each agent, so an agent carries only the tools it needs, which keeps context overhead low and tool calls precise.
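To show why per-agent selection reduces context, the sketch below renders only the chosen tools into an agent's prompt. The registry contents and the function names `tools_for_agent` and `render_tool_prompt` are assumptions made for this example, not part of the product.

```python
from typing import Dict, List

# Hypothetical registry of every tool available in the workspace.
TOOL_REGISTRY: Dict[str, str] = {
    "read_file": "Read a text file from disk.",
    "web_search": "Search the web for a query.",
    "send_email": "Send an email to a recipient.",
}


def tools_for_agent(selected: List[str]) -> Dict[str, str]:
    """Return only the tools explicitly selected for one agent."""
    unknown = [name for name in selected if name not in TOOL_REGISTRY]
    if unknown:
        raise KeyError(f"Unknown tools: {unknown}")
    return {name: TOOL_REGISTRY[name] for name in selected}


def render_tool_prompt(tools: Dict[str, str]) -> str:
    """Render tool descriptions as they might appear in the agent's context."""
    return "\n".join(f"- {name}: {desc}" for name, desc in tools.items())


# A research agent sees one tool description instead of three.
research_prompt = render_tool_prompt(tools_for_agent(["web_search"]))
full_prompt = render_tool_prompt(TOOL_REGISTRY)
```

Here `research_prompt` is a single line, while `full_prompt` lists every registered tool; the difference grows with the size of the registry.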

Workflow Integrated RAG

Seamlessly inject local context into any node using local embeddings (BGE-Small) and PolarisDB.
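The retrieval step behind this can be sketched as "embed the query, rank stored documents by similarity, prepend the best matches to the prompt". The example below is a toy stand-in under stated assumptions: a bag-of-words counter replaces the BGE-Small embedding model, and an in-memory list replaces PolarisDB. All function names are hypothetical.

```python
import math
from collections import Counter
from typing import List


def toy_embed(text: str) -> Counter:
    # Stand-in for a real embedding model: counts whitespace-separated tokens.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse token-count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the query."""
    query_vec = toy_embed(query)
    ranked = sorted(docs, key=lambda d: cosine(query_vec, toy_embed(d)), reverse=True)
    return ranked[:k]


def inject_context(prompt: str, docs: List[str], k: int = 1) -> str:
    """Prepend the retrieved context to the prompt a node would receive."""
    context = "\n".join(retrieve(prompt, docs, k))
    return f"Context:\n{context}\n\nQuestion: {prompt}"


# Sample local documents, invented for this sketch.
docs = [
    "Refunds are processed within 14 days.",
    "Our office is closed on public holidays.",
    "Shipping takes 3 to 5 business days.",
]

node_prompt = inject_context("How long do refunds take?", docs)
```

A real embedding model matches on meaning rather than exact tokens, so it would also handle paraphrased queries that this toy version misses.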